
12/10/2023 Entropy units

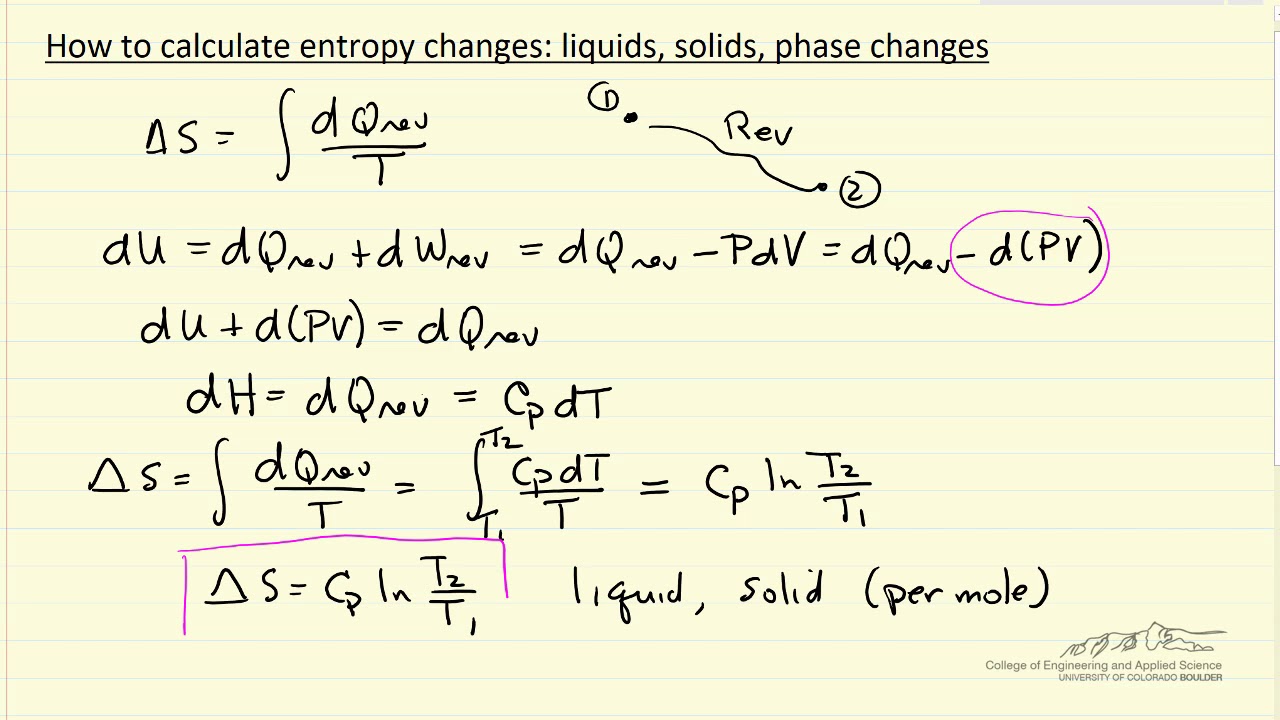

Entropy is the measure of the disorder of a system. It is denoted by the letter S and has units of joules per kelvin (J/K). According to the second law of thermodynamics, the entropy of a system can only decrease if the entropy of another system increases, so the entropy change of a system can be positive or negative. For a reaction to be feasible, the total entropy change should be positive.

Following Clausius, entropy is defined via its change. The entropy change of a system during a process can be calculated as

$$\Delta S = S_2 - S_1 = \int_1^2 \left(\frac{\delta Q}{T}\right)_{\text{int rev}} \quad (\text{kJ/K})$$

To perform this integral, one needs to know the relation between Q and T during the process.

The entropy unit (ue, eu) has the dimension $M L^2 T^{-2} Q^{-1} N^{-1}$, where M is mass, L is length, T is time, Q is temperature, and N is amount of substance. Entropy per unit mass is designated by s, in kJ/(kg·K). Molar entropy, as used in chemistry, is quoted in J K⁻¹ mol⁻¹.

Entropy is also used as a measure outside thermodynamics. In the paper "Quantitative Performance Assessment of CNN Units via Topological Entropy Calculation," Yang Zhao and Hao Zhang note that identifying the status of individual network units is critical for understanding the mechanism of convolutional neural networks (CNNs), but that it is still challenging to reliably give a general indication of unit status, especially for units in different network models. They describe a novel method for quantitatively clarifying the status of a single unit in a CNN: unit status is indicated via the calculation of a defined topological-based entropy, called feature entropy, which measures the degree of chaos of the global spatial pattern hidden in the unit for a category. In signal processing, one can likewise plot the spectral entropy of a signal, using a time-point vector t and a form that returns the spectral entropy se and its associated time te.

For further reading on the maximum entropy principle, see Jaynes, E. T. "Where Do We Stand on Maximum Entropy?" In The Maximum Entropy Formalism. ISBN: 9780262120807. See also "Information Theory and Statistical Mechanics." In Statistical Physics.
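As a rough illustration of the entropy-change integral discussed above, the sketch below assumes the simplest case: a body with constant heat capacity C heated reversibly, so that δQ = C dT and the integral reduces to C ln(T2/T1). The function names, the mass, and the specific heat value are illustrative assumptions, not taken from the text.

```python
import math


def entropy_change_numeric(heat_capacity_kj_per_k, t1_k, t2_k, steps=100_000):
    """Approximate dS = integral of dQ_rev / T with the midpoint rule.

    Assumes dQ_rev = C dT with a constant heat capacity C (in kJ/K).
    Temperatures are absolute (kelvin). Returns kJ/K.
    """
    dt = (t2_k - t1_k) / steps
    total = 0.0
    for i in range(steps):
        t_mid = t1_k + (i + 0.5) * dt
        total += heat_capacity_kj_per_k * dt / t_mid
    return total


def entropy_change_analytic(heat_capacity_kj_per_k, t1_k, t2_k):
    """Closed form for the same assumption: C * ln(T2 / T1), in kJ/K."""
    return heat_capacity_kj_per_k * math.log(t2_k / t1_k)


# Illustrative (assumed) numbers: 2 kg of a liquid with c = 4.18 kJ/(kg K).
mass_kg = 2.0
specific_heat = 4.18                      # kJ/(kg K), assumed constant
heat_capacity = mass_kg * specific_heat   # kJ/K

delta_S = entropy_change_numeric(heat_capacity, 300.0, 350.0)  # kJ/K
delta_s = delta_S / mass_kg                                    # kJ/(kg K), specific entropy change

print(f"Entropy change:          {delta_S:.4f} kJ/K")
print(f"Specific entropy change: {delta_s:.4f} kJ/(kg K)")
```

Dividing the total entropy change by the mass gives the change in specific entropy, which is why the two quantities differ in units by a factor of kg. Swapping the two temperatures (cooling instead of heating) gives a negative entropy change for the body.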

According to the Boltzmann equation, $S = k_B \ln W$, entropy is a measure of the number of microstates W available to a system. Entropy has units of energy over temperature, such as joules per kelvin (J/K).

The philosophy of assuming maximum uncertainty is discussed in Chapter 3 of Tribus, M. Another good explanation, in terms of estimating the probabilities of an unfair die, is given by Jaynes, E. T. Jaynes knew, of course, about Thomas Bayes, and when on sabbatical in England he sought out and photographed the Bayes tombstone. See also the Jaynes bibliography, list of unpublished works, and book reviews.
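The unfair-die idea can be sketched in a few lines. The example below assumes the standard setup in which only the average roll of a six-sided die is known. Maximizing Shannon entropy under that single constraint gives probabilities of the form p_i ∝ exp(λ·i), and λ is found here by bisection. The function names and the target mean of 4.5 are illustrative choices, not taken from the text.

```python
import math

FACES = range(1, 7)


def maxent_die(target_mean, tol=1e-12):
    """Maximum-entropy probabilities for faces 1..6 given only the mean.

    The solution has the form p_i proportional to exp(lam * i); lam is
    found by bisection because the mean increases monotonically with lam.
    target_mean must lie strictly between 1 and 6.
    """
    def mean_for(lam):
        weights = [math.exp(lam * i) for i in FACES]
        z = sum(weights)
        return sum(i * w for i, w in zip(FACES, weights)) / z

    lo, hi = -50.0, 50.0
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if mean_for(mid) < target_mean:
            lo = mid
        else:
            hi = mid

    lam = (lo + hi) / 2.0
    weights = [math.exp(lam * i) for i in FACES]
    z = sum(weights)
    return [w / z for w in weights]


def shannon_entropy_bits(probs):
    """Shannon entropy in bits (log base 2)."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


fair = maxent_die(3.5)     # mean of a fair die
biased = maxent_die(4.5)   # illustrative mean for an unfair die

print("fair:  ", [round(p, 4) for p in fair], round(shannon_entropy_bits(fair), 4), "bits")
print("biased:", [round(p, 4) for p in biased], round(shannon_entropy_bits(biased), 4), "bits")
```

With a mean of 3.5 the constraint carries no information and the result is the uniform distribution. Any other mean tilts the probabilities and lowers the entropy. The unit here is the bit because the logarithm is base 2; using the natural logarithm and multiplying by the Boltzmann constant would give J/K instead.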

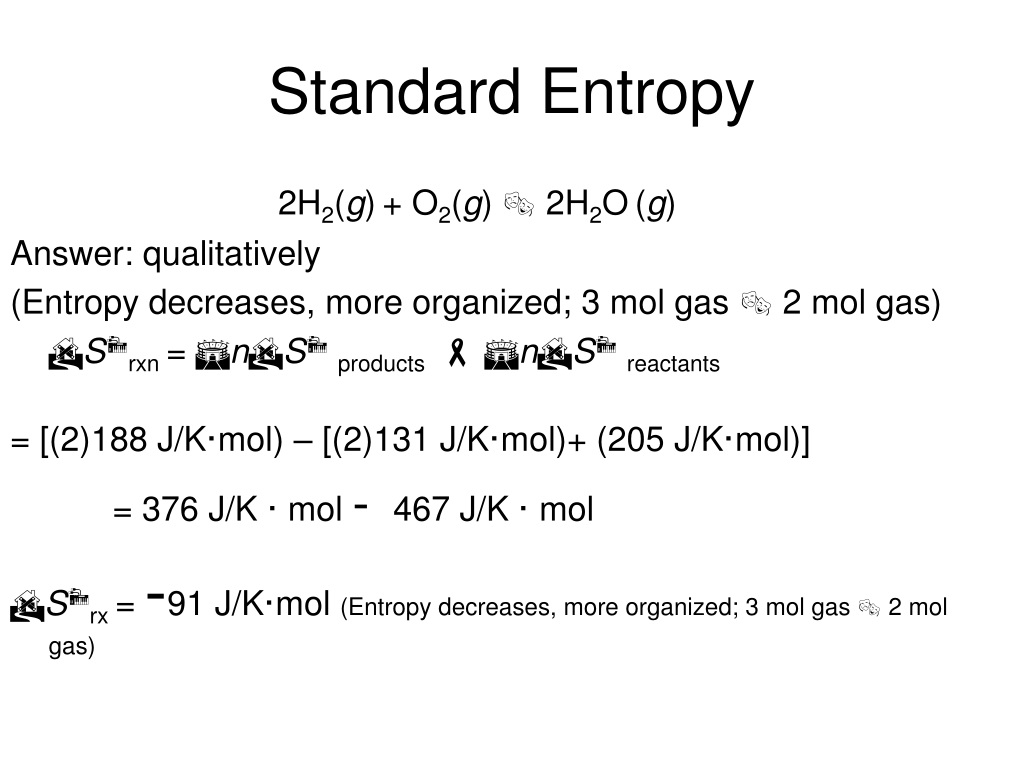

Entropy is a measure of the randomness or disorder of a system. Typical units are joules per kelvin (J/K). For chemical reactions at constant temperature and pressure, $-T\Delta S_{\text{total}}$ is a version of the Gibbs free energy change. Note that molar entropies are quoted in J K⁻¹ mol⁻¹, whilst Gibbs free energies are quoted in kJ mol⁻¹.

Jaynes, E. T. "Information Theory and Statistical Mechanics II." Physical Review 108 (October 15, 1957): 171–190. The first paper in this two-part series is the seminal paper which really started the modern use of the Principle of Maximum Entropy in physics.
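Because entropy is quoted in J K⁻¹ mol⁻¹ and Gibbs free energy in kJ mol⁻¹, a factor of 1000 is easy to lose when combining them. The sketch below assumes constant temperature and pressure and uses the standard relations ΔG = ΔH − TΔS and ΔS_total = ΔS − ΔH/T. The function names and the reaction values are made up purely for illustration.

```python
def gibbs_change_kj_per_mol(delta_h_kj_per_mol, delta_s_j_per_k_mol, temperature_k):
    """Delta G = Delta H - T * Delta S, with Delta S converted from J to kJ."""
    delta_s_kj_per_k_mol = delta_s_j_per_k_mol / 1000.0
    return delta_h_kj_per_mol - temperature_k * delta_s_kj_per_k_mol


def total_entropy_change_j_per_k_mol(delta_h_kj_per_mol, delta_s_j_per_k_mol, temperature_k):
    """Delta S_total = Delta S_system + Delta S_surroundings.

    The surroundings term is -Delta H / T, with Delta H converted from kJ to J.
    """
    return delta_s_j_per_k_mol - (delta_h_kj_per_mol * 1000.0) / temperature_k


# Hypothetical reaction: Delta H = +100 kJ/mol, Delta S = +200 J/(K mol).
dH, dS = 100.0, 200.0

for T in (298.0, 600.0):
    dG = gibbs_change_kj_per_mol(dH, dS, T)
    dS_total = total_entropy_change_j_per_k_mol(dH, dS, T)
    feasible = dS_total > 0
    print(f"T = {T:5.0f} K  dG = {dG:7.2f} kJ/mol  dS_total = {dS_total:8.2f} J/(K mol)  feasible: {feasible}")
```

For these assumed values the sign of ΔG flips at T = ΔH/ΔS = 500 K, which is the same temperature at which the total entropy change becomes positive.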

The person most responsible for the use of maximum entropy principles in various fields of science is Edwin T. Jaynes. See Jaynes, E. T. "Information Theory and Statistical Mechanics." Physical Review 106 (May 15, 1957): 620–630.

The SI units of entropy and entropy change are joules per kelvin (J/K). In engineering thermodynamics, entropy is usually given in kJ/K and specific entropy in kJ/(kg·K), where specific entropy is the entropy per unit mass of a system. Entropy is a property of a system. In classical thermodynamics, infinitesimal changes in the energy U, entropy S, and volume V of a system are related by the fundamental thermodynamic relation.
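The equation linking infinitesimal changes in U, S, and V is not given in the text. It is presumably the fundamental thermodynamic relation for a closed system doing only pressure-volume work, written here with T for absolute temperature and P for pressure (both symbols are assumptions):

```latex
\[
  dU = T\,dS - P\,dV
\]
% Unit check for the entropy term:
\[
  [T\,dS] = \mathrm{K} \cdot \frac{\mathrm{J}}{\mathrm{K}} = \mathrm{J}
\]
```

The unit check shows why entropy must carry units of energy over temperature: the product T dS has to be an energy, like the other terms in the relation.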
